The Illustrated BERT, ELMo, and co. (How NLP Cracked Transfer Learning) – Jay Alammar – Visualizing machine learning one concept at a time.

Combining BERT with Static Word Embedding for Categorizing Social Media | Research Paper Walkthrough - YouTube

Figure presents the accuracy of amazon 20 products using gcForest on... | Download Scientific Diagram

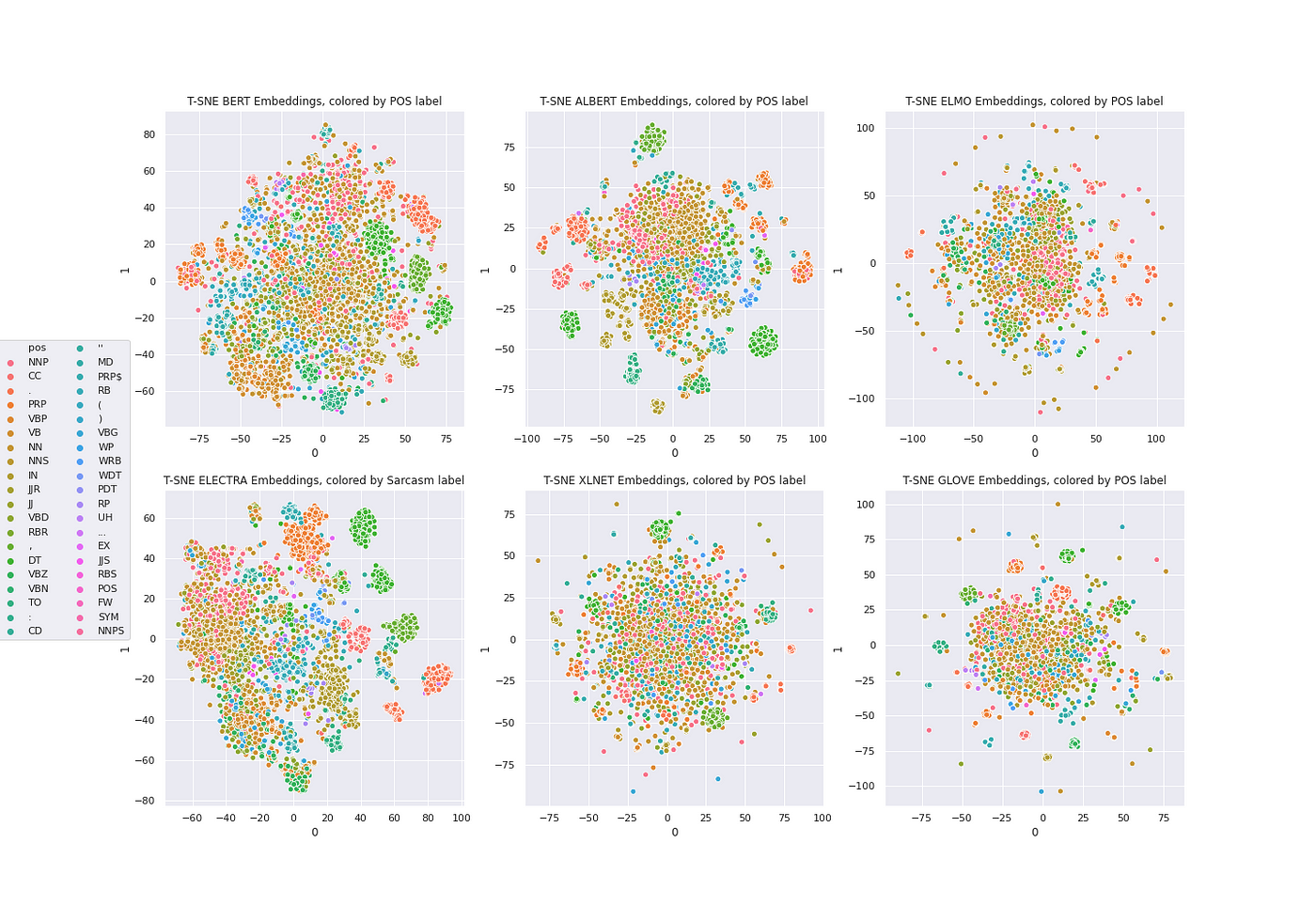

1 line of Python code for BERT, ALBERT, ELMO, ELECTRA, XLNET, GLOVE, Part of Speech with NLU and t-SNE | by Christian Kasim Loan | spark-nlp | Medium

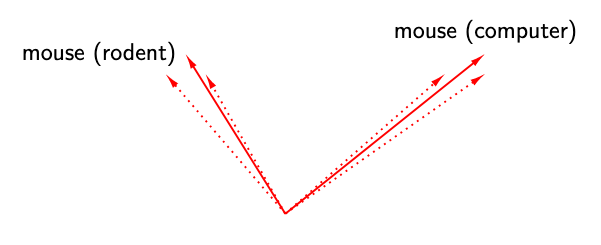

GitHub - bhattbhavesh91/word2vec-vs-bert: I'll show how BERT models being context dependent are superior over word2vec, Glove models which are context-independent.

FakeBERT: Fake news detection in social media with a BERT-based deep learning approach | SpringerLink

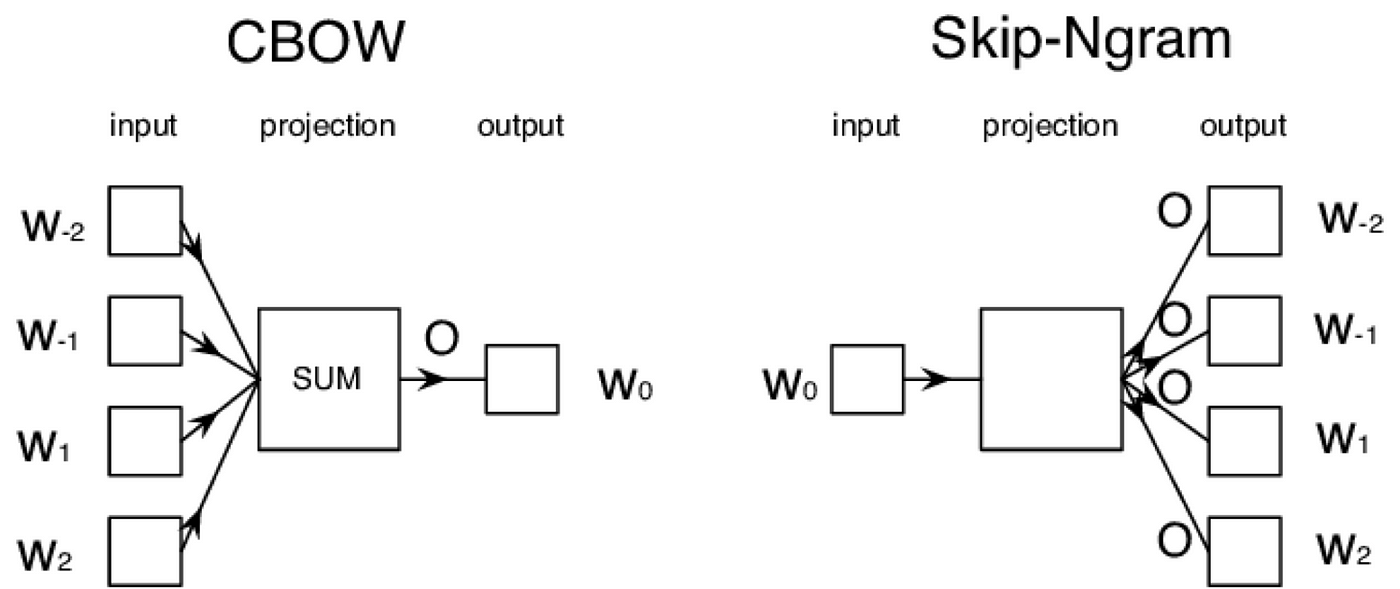

Text Classification with NLP: Tf-Idf vs Word2Vec vs BERT | by Mauro Di Pietro | Towards Data Science

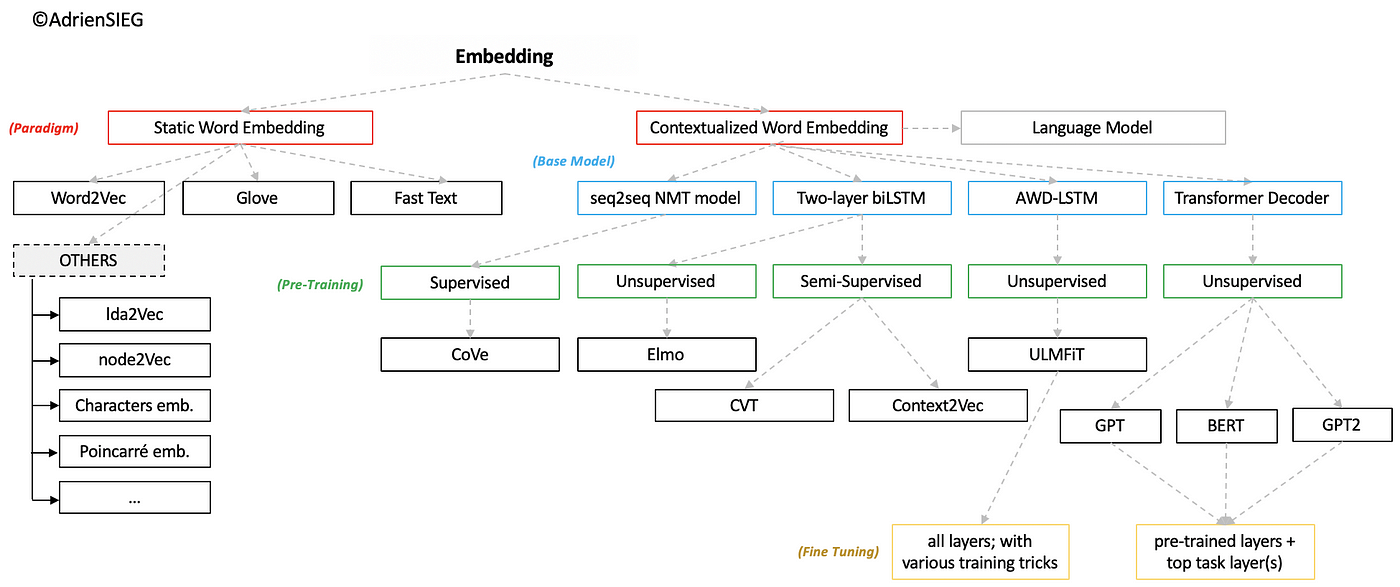

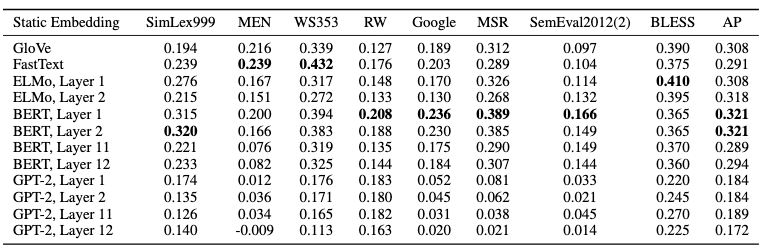

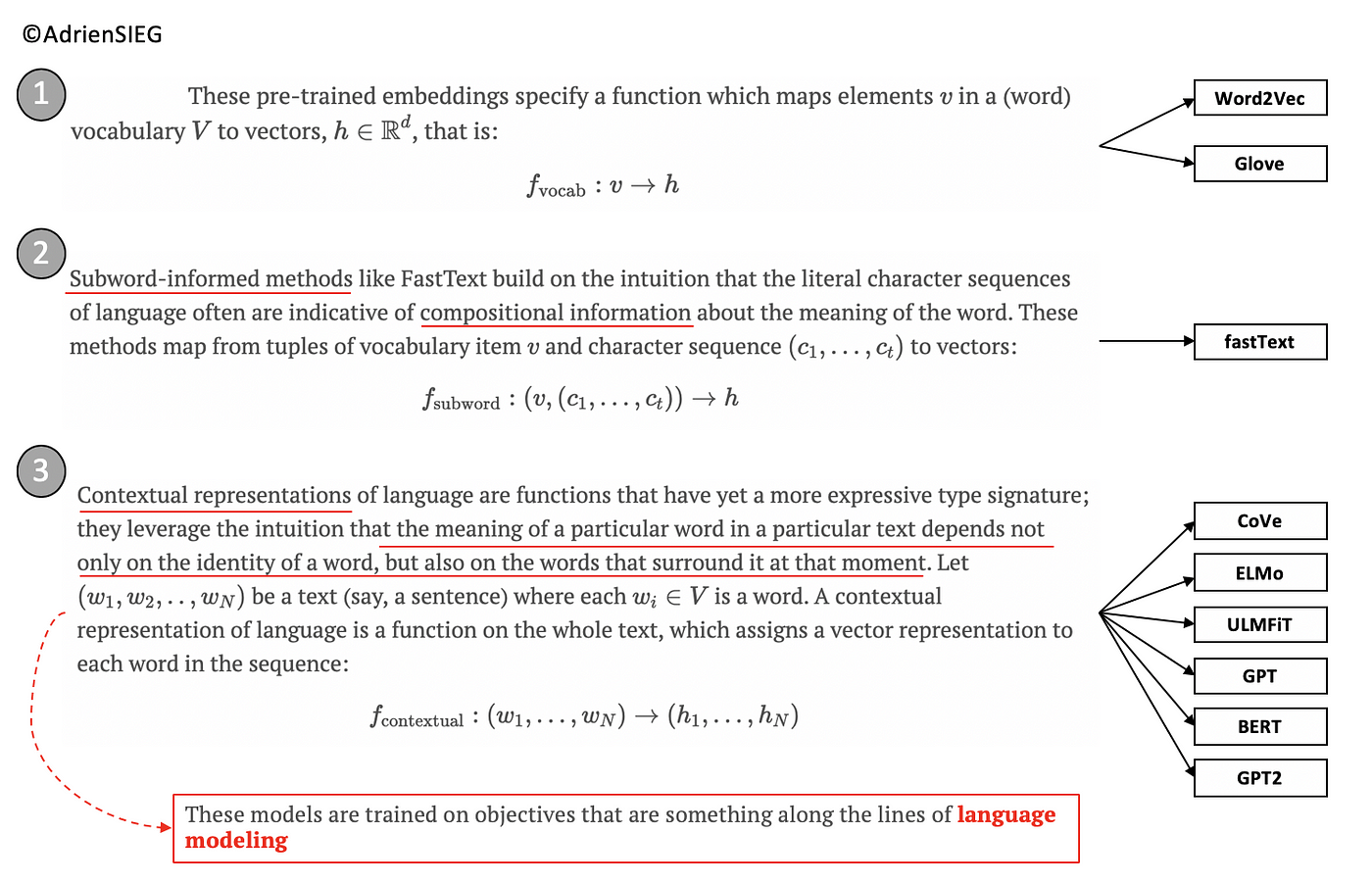

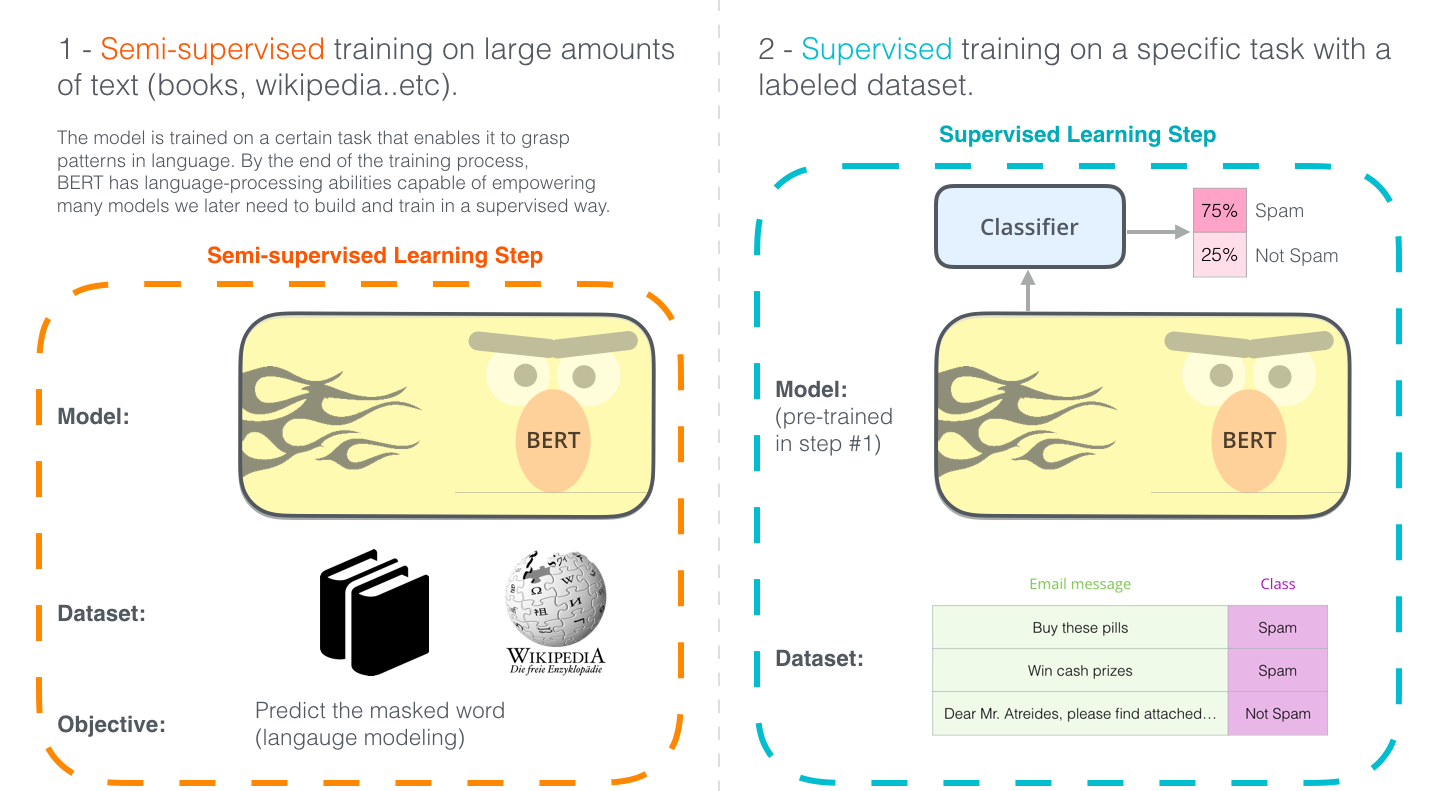

FROM Pre-trained Word Embeddings TO Pre-trained Language Models — Focus on BERT | by Adrien Sieg | Towards Data Science

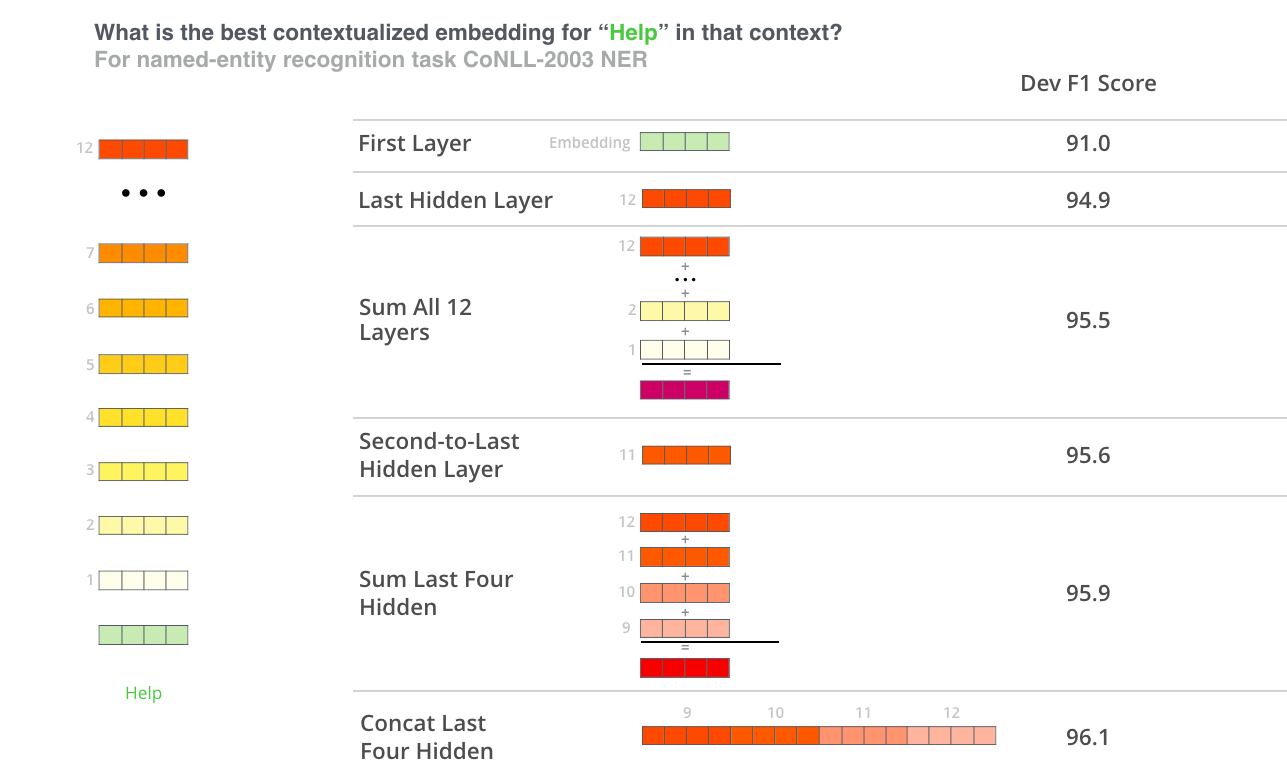

16.7. Natural Language Inference: Fine-Tuning BERT — Dive into Deep Learning 1.0.0-beta0 documentation

Word embeddings for biomedical natural language processing: A survey - Chiu - 2020 - Language and Linguistics Compass - Wiley Online Library

What are the main differences between the word embeddings of ELMo, BERT, Word2vec, and GloVe? - Quora
